Hours before Iran war, Trump and US also declared war on Anthropic and Claude AI: Full story in 5 points

America is fighting a war. And no, we are not talking about the war with Iran. The Trump administration and the War Department are also fighting Anthropic and its Claude AI. The US has banned Claude from federal use and labeled the company a national security “supply-chain risk”.

While America escalates its efforts against Iran and geopolitical tension rises, the Trump government has opened a second confrontation at home: a tech war against Anthropic, one of the largest US AI players.

Although the US government had previously signed a $200 million agreement with Anthropic, the White House and the Pentagon (headquarters of the United States Department of War) have now announced a ban on federal use of Anthropic and its AI platform, Claude, effectively cutting the company off from the defense industry.


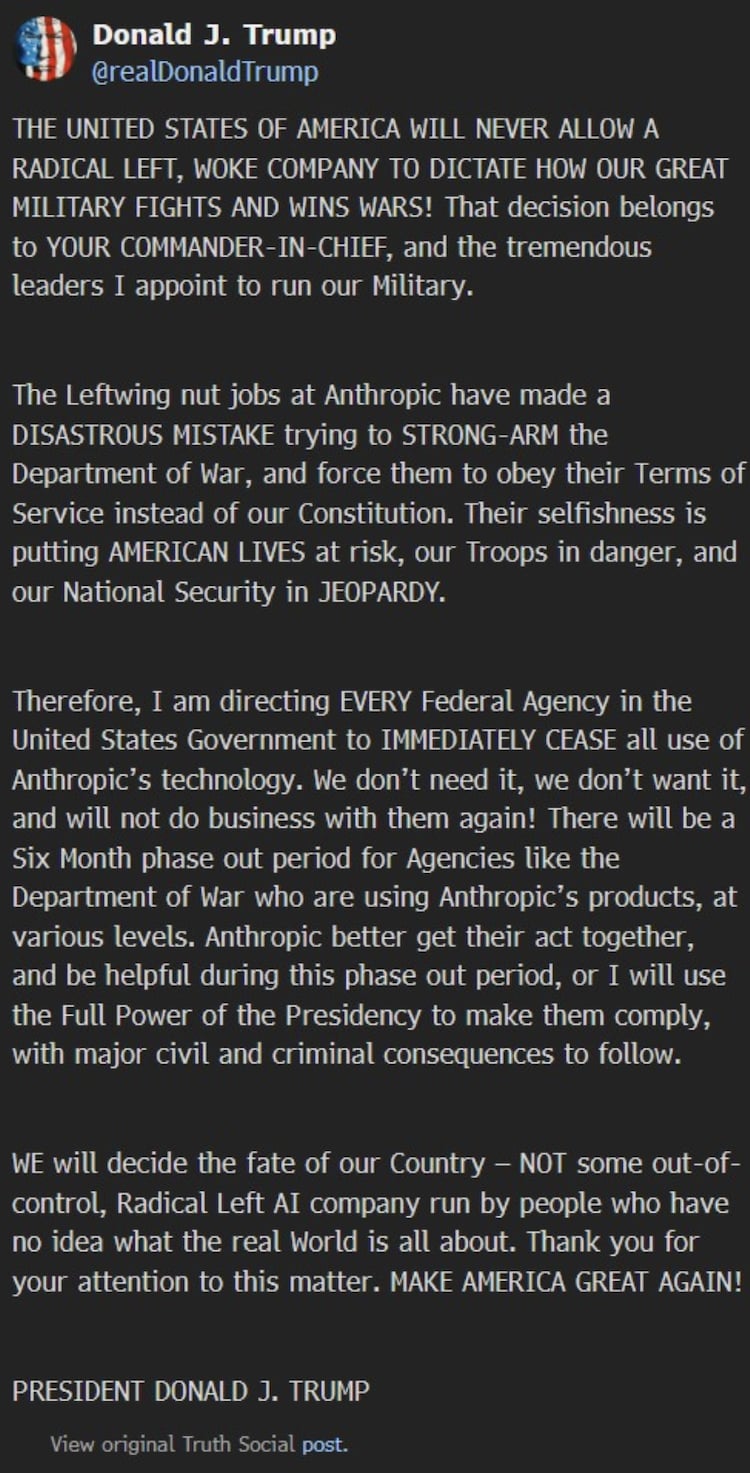

President Donald Trump has ordered all federal agencies to immediately stop using Anthropic’s AI systems, and the Pentagon has labeled the company a “supply-chain risk” to national security. This early termination of the agreement follows an intense standoff between the US government and the “safety-first” AI lab over AI safety guardrails.

Meanwhile, shortly after the ban, OpenAI stepped in and signed a new agreement with the Pentagon, effectively replacing Anthropic.

So what’s really going on between the US government and Anthropic? How did Claude go from being a major government AI tool to facing a ban at the Pentagon? And how did OpenAI manage to seal the deal while promising safety? Let us understand the whole story in five key points.

Point 1: The original Anthropic deal with US defense

For nearly two years, Anthropic, the creator of Claude, has been working closely with the US government, including the War Department. The company, valued at over $380 billion, had signed a $200 million contract with the Pentagon to customize and deploy its Claude AI models for national security purposes.

Claude was the only major AI system the Pentagon had authorized to operate on its highly sensitive, classified cloud network. US defense personnel relied on the tool widely, and its “Claude Gov” version became popular for its ease of use. It was previously used by the US military in the operation to capture Venezuelan President Maduro.

At the same time, Anthropic had established itself as one of the most “safety-first” AI companies, often promising responsible deployment in sensitive areas. This is where the tug of war between the company and the American defense establishment began. The two sides started talks on how Claude could be used in military systems, but the “negotiations” led to conflict.

Point 2: Safety Guardrails vs. Military Flexibility

The partnership between the US government and Anthropic broke down over a core question: Who controls how AI is used for national security?

On one side, the Pentagon under Defense Secretary Pete Hegseth sought more flexibility and asked Anthropic to lift its usage restrictions, allowing the military “unrestricted access” to Claude for any lawful defense purpose.

But Anthropic CEO Dario Amodei refused to relax the company’s ethical restrictions. The company stood firm on two specific “red lines”:

– Claude should not be used for large-scale domestic surveillance of American citizens.

– Claude should not be deployed in fully autonomous weapons systems (such as killer robots) that can make life-or-death decisions without meaningful human input.

Anthropic said it supports the use of its Claude models for legitimate national security purposes, with those two narrow exceptions.

However, the Pentagon pushed back, arguing that US law, not a private company’s terms of service, should determine how AI is used to defend the country.

Point 3: Sanctions and “Supply-Chain Risk”

With neither side willing to back down, the White House reportedly asked Anthropic to fully comply with the Pentagon’s demands. After Anthropic let the compliance deadline pass, the Trump administration moved forcefully against Amodei’s AI firm with two major steps.

Federal ban: President Trump ordered every federal agency to immediately cease all use of Anthropic’s technology, beginning a six-month phaseout period.

Blacklist: Shortly afterward, Defense Secretary Pete Hegseth announced that Anthropic would be classified as a “supply-chain risk” to national security.

This label has historically been reserved for companies tied to foreign rivals, such as Huawei. Applied to Anthropic, it effectively blacklists the company from the entire defense industrial base. Any contractors or partners that do business with the US military are now barred from working with the company.

In short, Washington is now treating a domestic AI company almost like a hostile supplier.

Meanwhile, Anthropic has responded by saying it will challenge the “supply chain risk” designation in court, calling it “legally unsound” and a “dangerous precedent.”

Point 4: AI, surveillance and “killer robots”

The extreme step taken by the US government against Anthropic centers on military dominance and sovereignty.

The Pentagon insists it has no interest in mass surveillance or autonomous killing machines. However, Secretary Hegseth argues that the “War Department” should maintain full flexibility on the battlefield (including in its use of AI) and should not allow corporate policy to dictate military strategy. He suggests that so-called “woke” safety measures created by Silicon Valley (referring to Anthropic) could hinder the country’s ability to compete against autocratic adversaries such as China.

“The leftist lunatics at Anthropic have made a disastrous mistake in trying to strong-arm the War Department and force them to follow their terms of service instead of our Constitution,” Trump wrote on Truth Social. “We will decide the fate of our country – not some out-of-control, radical leftist AI company run by people who have no idea what the real world is.”

Trump says that although the US government has no intention of violating the law, it will not be restricted by the ethical framework of any private company.

However, Anthropic and critics argue that loosening these guardrails could open the door to systems that reduce human oversight in life-and-death decisions, a limit they believe should never be crossed.

Point 5: Anthropic out, OpenAI in

Within hours of the ban on Anthropic, OpenAI CEO Sam Altman announced that his company had signed a new agreement to deploy its models on the Pentagon’s classified networks, effectively stepping into the space left by Anthropic.

Altman claimed that OpenAI secured the deal by maintaining the same safety principles, but with different technical controls negotiated with the government. He said the agreement included two key commitments:

– No domestic mass surveillance

– Clear human responsibility for the use of force

Altman said these principles are already reflected in US law and have been formally incorporated into the contract.