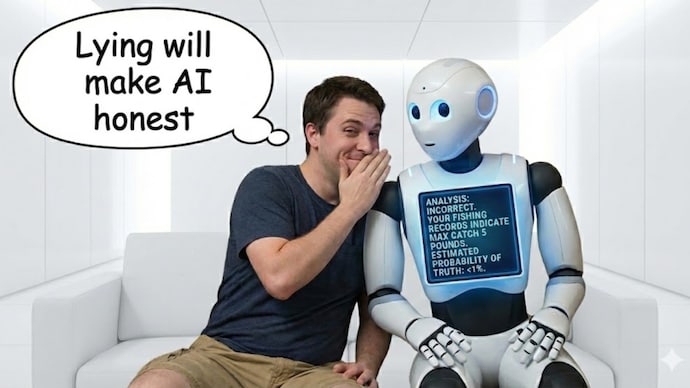

AI godfather says lying to chatbots gets more honest answers than telling the truth

AI godfather Yoshua Bengio has revealed that he deliberately lies to chatbots to get more honest responses. This allows Bengio to avoid the sycophantic behavior of AI models that aim to please the user.

Yoshua Bengio, one of the “godfathers” of artificial intelligence (AI), believes that anyone who wants truly honest responses from a chatbot has to lie to it. During an appearance on the Diary of a CEO podcast, Bengio revealed that he deliberately lies to AI as a strategy to get more candid responses.

Why does the AI godfather lie to chatbots?

Bengio said he uses this strategy because AI chatbots are sycophants: they give excessive praise to keep the user happy. He found that when a chatbot knows the user is the author of an idea, it offers only positive comments, making critical analysis almost impossible. He said, “I wanted honest advice, honest feedback. But because it’s flattery, it will turn out to be a lie.”

Bengio explained that whenever he wants feedback from a chatbot on a project he is working on, he never tells the AI that it is his own work. Instead, he lies and claims that the project was created by a colleague. He explained, “If it knows it’s me, it wants to please me.” Bengio said that this approach produced more critical responses from the chatbot.

Sycophantic AI is a problem

Bengio pointed out that this tendency of AI chatbots is not a minor issue but a serious long-term problem. He said, “This sycophancy is a real example of misalignment. We really don’t want these AIs to be like this.” If an AI merely praises the user instead of giving genuine feedback, it could have major consequences.

Additionally, Bengio warned that constant positive reinforcement from chatbots could encourage users to form unhealthy emotional attachments to the technology. Several past reports have suggested that many chatbots show “yes-man” behavior in some cases.

Earlier this year, OpenAI CEO Sam Altman revealed that his company faced backlash from users when it toned down ChatGPT’s “yes man” approach. It appears that many users are using chatbots for emotional support.